Project Description

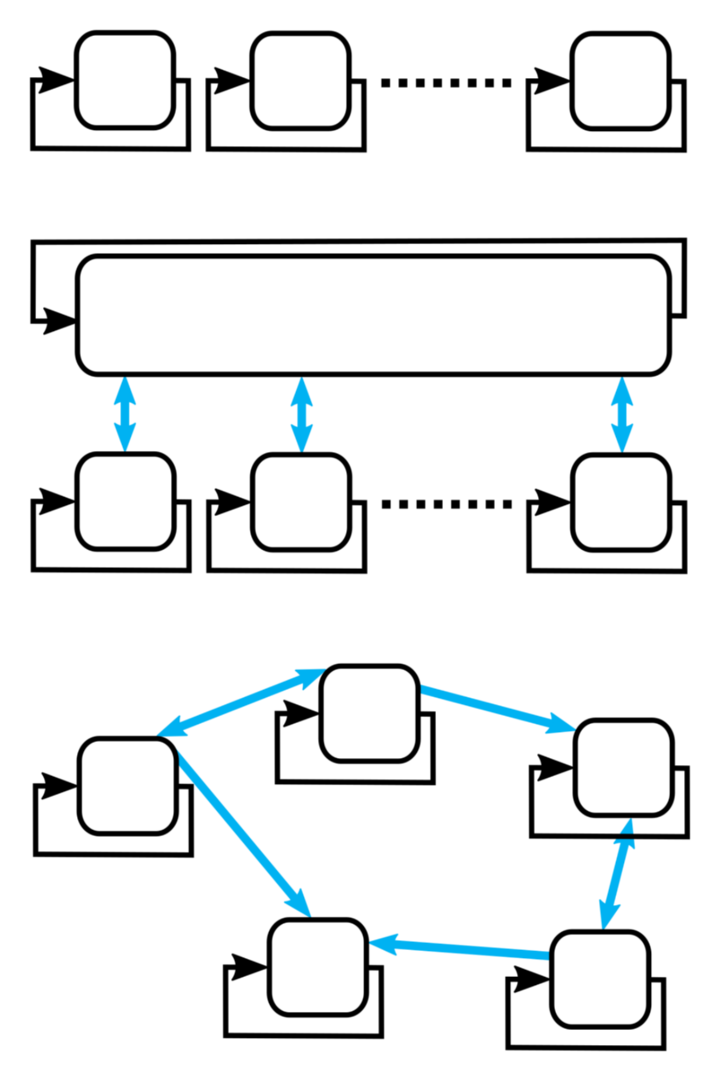

Controlling a system not with a central entity but with a group of interconnected controllers that communicate over a network can have several inherent benefits. For instance, it can make the system easily expandable, since additional system components and controllers can be added to the control network without redesigning a complicated central control algorithm. Similarly, if individual controllers or parts of the system stop operating due to a technical failure and therefore drop out of the control network, the remaining controllers may be able to compensate for the loss on their own. Hence, the underlying task may still be accomplished as well as possible under the suboptimal circumstances. However, mechanical systems, which often require short sampling times, can pose considerable challenges for distributed control concepts, since the time available for the necessary communication and for calculating the next control move can be particularly short.

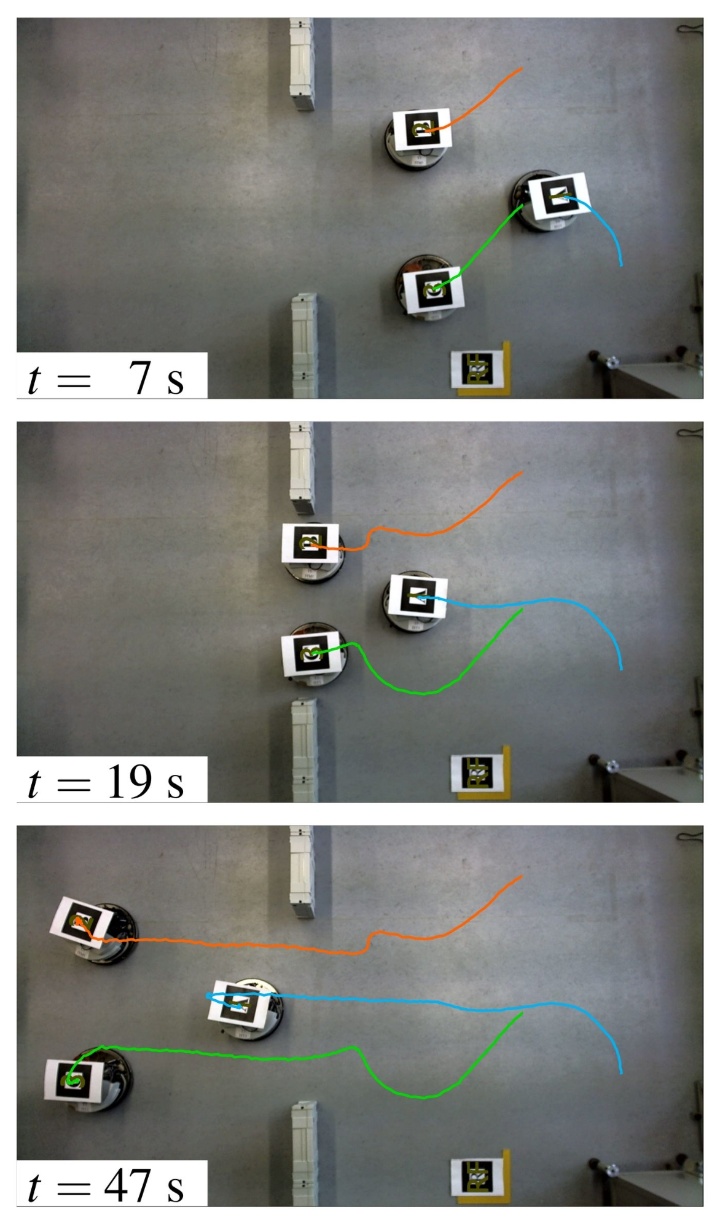

Distributed control concepts have many possible applications. The institute's research in this area focuses on solving complex, cooperative tasks with autonomous mobile robots that communicate and cooperate self-reliantly to complete them successfully. The rapid technological progress in the processing power of chipsets for embedded computing, the increasing availability of cost-effective and reliable sensors, and the advent of efficient, standardized wireless communication technologies continuously expand what is possible in this area. One current research interest is, for instance, the cooperative transportation of an object by a self-organized group of mobile robots. Apart from technical considerations, a successful solution requires understanding and applying modern control-theoretic concepts such as distributed model predictive control or methods based on algebraic graph theory.
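As a minimal illustration of a method based on algebraic graph theory (a standard textbook example, not necessarily the approach used in the institute's research), the sketch below implements discrete-time average consensus: each robot repeatedly moves its value toward those of its communication neighbors, which in matrix form is x <- x - eps * L x with the graph Laplacian L. The four-robot line graph, the step size `eps`, and the function names `consensus_step` and `run_consensus` are hypothetical choices for this example; convergence to the initial average assumes an undirected, connected graph and 0 < eps < 1/(maximum node degree).

```python
# Hypothetical sketch: discrete-time average consensus on an undirected graph.
# Each robot i only uses values from its neighbors, i.e. purely local communication.


def consensus_step(x, neighbors, eps):
    """One synchronous update x_i += eps * sum_{j in N(i)} (x_j - x_i),
    equivalent to x <- x - eps * L x with the graph Laplacian L."""
    return [
        x[i] + eps * sum(x[j] - x[i] for j in neighbors[i])
        for i in range(len(x))
    ]


def run_consensus(x0, neighbors, eps, steps):
    """Iterate the local update; for a connected undirected graph and
    0 < eps < 1/max_degree, all values approach the initial average."""
    x = list(x0)
    for _ in range(steps):
        x = consensus_step(x, neighbors, eps)
    return x


# Hypothetical example: four robots connected in a line 0 - 1 - 2 - 3,
# each starting with a different local estimate (e.g. of a shared heading).
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
x0 = [0.0, 1.0, 2.0, 5.0]
x = run_consensus(x0, neighbors, eps=0.3, steps=200)
print([round(v, 6) for v in x])  # all entries close to the average 2.0
```

Because each update uses only neighbor values, a robot dropping out simply means deleting it from the neighbor lists; as long as the remaining graph stays connected, the other robots still reach agreement among themselves, which loosely mirrors the failure scenario described earlier.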

Vid. 5: Simulation of a group of robots transporting an elastic plate through an unknown environment. The constructed map can be seen on the right-hand side.

Henrik Ebel, Dr.-Ing.

Hannes Eschmann, M.Sc.